Manage All Types of Testing in One Place with Perforce ALM

You want the highest quality product possible. To get there, you’ll need to run tests constantly. But manually creating, running, and tracking test cases slows you down and puts your product quality at risk.

With increasing emphasis on automated testing, tracking test results with non-traceable tools like spreadsheets just won't cut it anymore. That's where Perforce ALM’s test case management module comes in.

Create, execute, and track tests with ease. Use it as a standalone module for test management or as part of the Perforce ALM suite. Even pair it with your existing tools, including Jira for issue management and Jenkins for automated testing.

Streamline Test Case Management

Creating test cases with spreadsheets and documents is time-consuming. But with the test case management module in Perforce ALM, it's quick and easy.

What You Can Do with Perforce ALM Test Case Management

One Test Case Management Tool for Everything

Create. Organize. Test. Track.

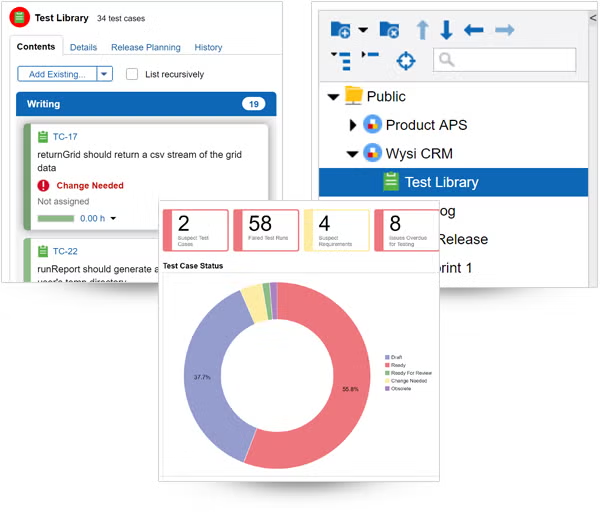

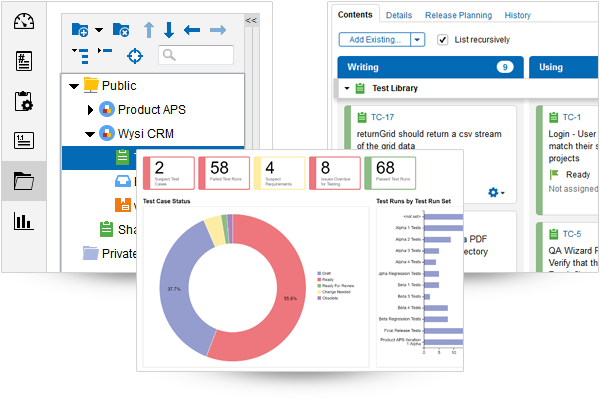

Do it all in a single test management tool. Today, testing often happens in silos, creating blind spots across development and QA teams. Those blind spots can introduce risk and product defects that aren't discovered until it's too late.

With Perforce ALM’s test case management module, you can manage all testing, including exploratory testing, in a single tool to ensure comprehensive test coverage. Functional. Validation. Performance. Regression. Acceptance. Safety. Security. In ALM, it’s easy, even in Agile environments.

You'll always know how much testing is complete and how much remains, so you can report your current test status to stakeholders at any time.

You'll spot issues and defects earlier in the cycle when it's easier to resolve them.

And you won't outgrow this test management tool. It can scale to handle the largest projects. What’s more, your entire test management strategy will live in one place, giving you single-pane-of-glass visibility.

Better Test Coverage & Improved Efficiency

Full Coverage Testing

The most common question testing teams ask is, “What should we test?” That's because most organizations lack a direct link between requirements and test case management. Perforce ALM’s test case management module enables Requirements-Driven Test Management (RDTM). You can create tests based on requirements and simplify the ‘definition of done’ (DoD) into a crystal clear test case.

You’ll get seamless test coverage, complete visibility of test results, and faster feedback on quality findings. Bugs and defects can be fixed earlier, so you can ensure business continuity, avoid wasting developer resources, and focus on innovation.

Reduce Manual Work

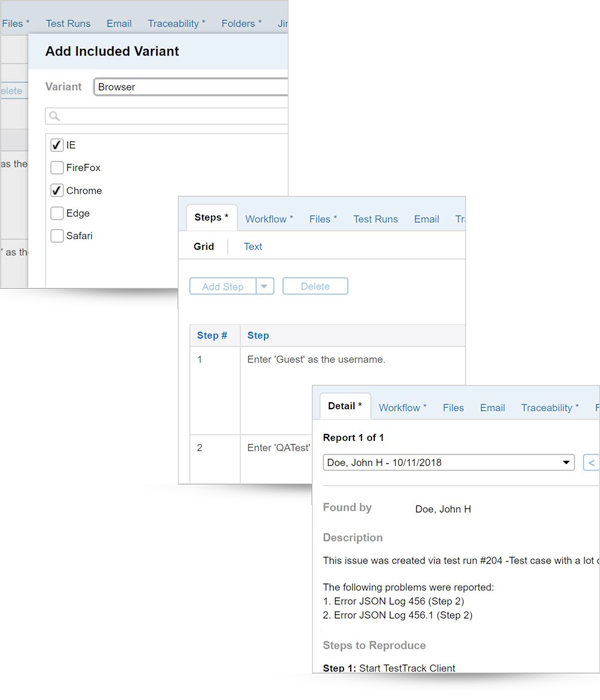

With ALM’s test case management module, reusing test steps is easy and lets you:

- Reduce manual work for testing.

- Increase testing efficiency.

- Create consistency across tests and projects.

Write one test and use it for multiple systems and browsers. Group related tests in suites to streamline planning for everyone. Reuse test cases in multiple automation suites to further increase testing efficiency.
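To make the "write once, run on many configurations" idea concrete, here is a minimal Python sketch. It is illustrative only: the `ReusableCase` record and `expand_runs` helper are hypothetical names, not part of Perforce ALM, and the data is made up.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class ReusableCase:
    # Hypothetical record for illustration; not Perforce ALM's data model.
    case_id: str
    steps: tuple


def expand_runs(case, systems, browsers):
    """Return one planned run per (system, browser) pair for a single test case."""
    return [
        {"case_id": case.case_id, "system": s, "browser": b, "steps": case.steps}
        for s, b in product(systems, browsers)
    ]


login = ReusableCase("TC-1", ("Open login page", "Enter credentials", "Verify dashboard"))
runs = expand_runs(login, ["Windows", "macOS"], ["Chrome", "Firefox"])
print(len(runs))  # one written test, four planned runs
```

The steps are written once and shared by every run, so a change to the test case reaches all four configurations.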

A Test Management Tool With Full Traceability

Get Full Visibility Up and Downstream

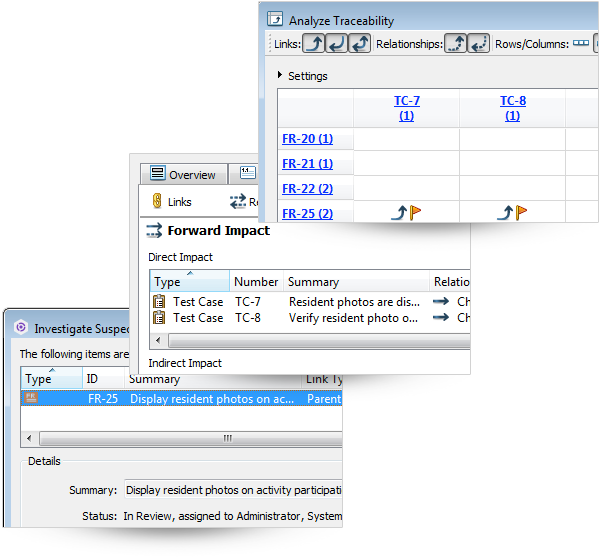

Understand the relationship between requirements, test cases, and issues. Trace the issue when tests fail. When requirements change, you’ll know to adjust your test cases. And you’ll know to make new tests when issues arise.

Perforce ALM’s test case management module creates automated, continuous traceability, so you can connect the dots. It even creates an instant traceability matrix.

So, you'll be able to:

- Understand how changes impact your requirements and test cases.

- Trace changes made in source code to a requirement in order to resolve a defect.

- Maintain and regulate requirements by requiring sign-offs on each test step.
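As a rough picture of what a traceability matrix holds, the Python sketch below links requirements to test cases and test cases to issues. It is a simplified illustration under assumed data: the IDs, the link lists, and the `build_matrix` and `untested` helpers are all hypothetical and do not reflect how Perforce ALM stores or computes traceability.

```python
# Hypothetical link data: (requirement, test case) and (test case, issue).
requirements = ["REQ-1", "REQ-2", "REQ-3"]
req_to_tests = [("REQ-1", "TC-1"), ("REQ-1", "TC-2"), ("REQ-2", "TC-3")]
test_to_issues = [("TC-2", "BUG-7")]


def build_matrix(requirements, req_to_tests, test_to_issues):
    """Map each requirement to its test cases and the issues raised by them."""
    issues_by_test = {}
    for test, issue in test_to_issues:
        issues_by_test.setdefault(test, []).append(issue)

    matrix = {req: {} for req in requirements}
    for req, test in req_to_tests:
        matrix[req][test] = list(issues_by_test.get(test, []))
    return matrix


def untested(matrix):
    """Requirements with no linked test case, i.e. coverage gaps."""
    return [req for req, tests in matrix.items() if not tests]


matrix = build_matrix(requirements, req_to_tests, test_to_issues)
for req, tests in matrix.items():
    print(req, tests)
print("No coverage:", untested(matrix))
```

Reading a row left to right answers "which tests cover this requirement, and which issues did they find?", while an empty row flags a requirement that still needs a test.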

Easily Integrate Test Case Management With Your Existing Tools

Need to Track Changes or Know the Reason Behind a Change?

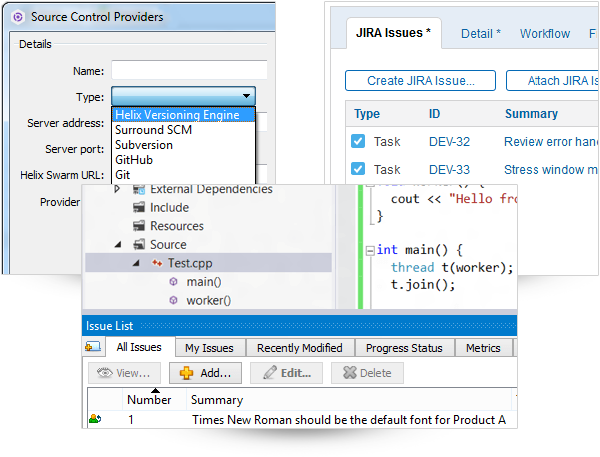

Chances are, Perforce ALM integrates with your favorite version control provider. After all, Perforce makes two of them: P4 (formerly Helix Core) and Surround SCM.

Using Automated Testing Tools?

Perforce ALM’s test case management module supports automated testing tools such as Jenkins, QA Wizard Pro, HP QuickTest Pro (UFT), and others. It also integrates with popular IDEs, so you can create, update, and close out issues without leaving your IDE.

Perforce ALM builds a bridge to your continuous integration/continuous delivery (CI/CD) pipeline. Regardless of your automated testing tool, you can automatically send test results back to ALM and associate the results with test cases for complete traceability.
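One common way to get results out of a CI job is a JUnit-style XML report. The Python sketch below shows the general shape of that step: it parses such a report and pairs each result with a test case ID. Everything specific here is an assumption for illustration: the sample XML, the `CASE_IDS` mapping, and the payload layout are hypothetical, and the sketch deliberately stops short of sending anything, since the actual submission mechanism depends on your ALM setup.

```python
import json
import xml.etree.ElementTree as ET

# Sample JUnit-style report, as many CI test runners can produce.
JUNIT_XML = """<testsuite name="login" tests="3">
  <testcase classname="auth" name="test_login_ok"/>
  <testcase classname="auth" name="test_login_bad_password">
    <failure message="expected 401, got 500"/>
  </testcase>
  <testcase classname="auth" name="test_sso"><skipped/></testcase>
</testsuite>"""

# Hypothetical mapping from automated test names to test case IDs.
CASE_IDS = {"test_login_ok": "TC-1", "test_login_bad_password": "TC-2"}


def results_from_junit(xml_text, case_ids):
    """Turn a JUnit-style report into a list of per-test result records."""
    root = ET.fromstring(xml_text)
    results = []
    for case in root.iter("testcase"):
        name = case.get("name")
        if case.find("failure") is not None or case.find("error") is not None:
            status = "failed"
        elif case.find("skipped") is not None:
            status = "skipped"
        else:
            status = "passed"
        # case_id is None when the automated test has no mapped test case.
        results.append({"case_id": case_ids.get(name), "test": name, "status": status})
    return results


results = results_from_junit(JUNIT_XML, CASE_IDS)
print(json.dumps(results, indent=2))
```

Results with no mapped `case_id` are worth surfacing in the pipeline log, because they mark automated tests that are not yet traceable to a test case.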

Need to Automate Processes?

With our API, you can automate processes, build custom solutions, and exchange data between ALM’s test case management module and other applications.

Plus, our professional services team is always ready to help with custom tool integrations.

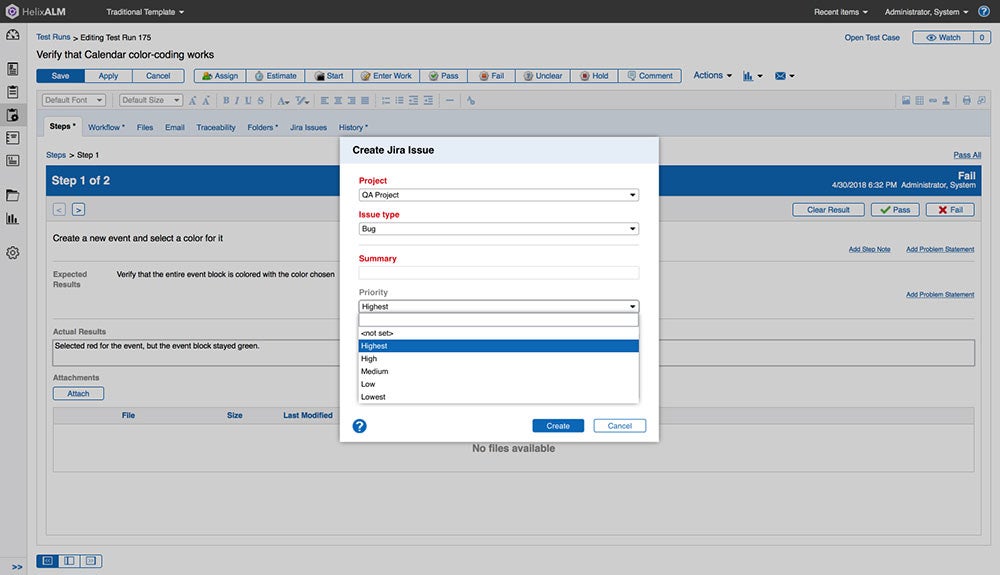

A Test Management Tool for Jira

Keep Using Jira But Get the Test Management You Need For Traceability

Using Jira to track issues and manage tasks? It’s meant for bug tracking, not for test management. That’s where Perforce ALM’s test case management module comes in. It can serve as your system of record on top of Jira.

Perforce ALM fully integrates and syncs seamlessly with Jira. You can easily create tests in ALM based on Jira issues. This allows you to get the structured test management you need while still using Jira.

No matter which tool you’re in, it’s easy to track issues, test cases, and test runs. You’ll always know which issues have been resolved, which test cases are approved, and which test runs have passed or failed.

See How It Works

Watch a demo of the full Perforce ALM suite to see powerful test case management in action.

Try Test Case Management for Free

Get started with a free 30-day trial of Perforce ALM to see how you can streamline test case management and gain end-to-end traceability across the entire product lifecycle.