Work Beyond Repo and File Limits

Large teams of artists, developers, and engineers run into limits when they use Git alone. File size limits, repo size limits, and a lack of granular security controls surface sooner than you’d think, especially for teams working with many binary files. That’s where P4 comes in. The P4 Platform is built to handle the large files and project repos common in game engine (or real-time 3D engine) workflows. With P4 DAM (formerly Helix DAM), it also offers a visual library your art teams can use to preview, review, and get feedback on their assets.

Git with Perforce P4

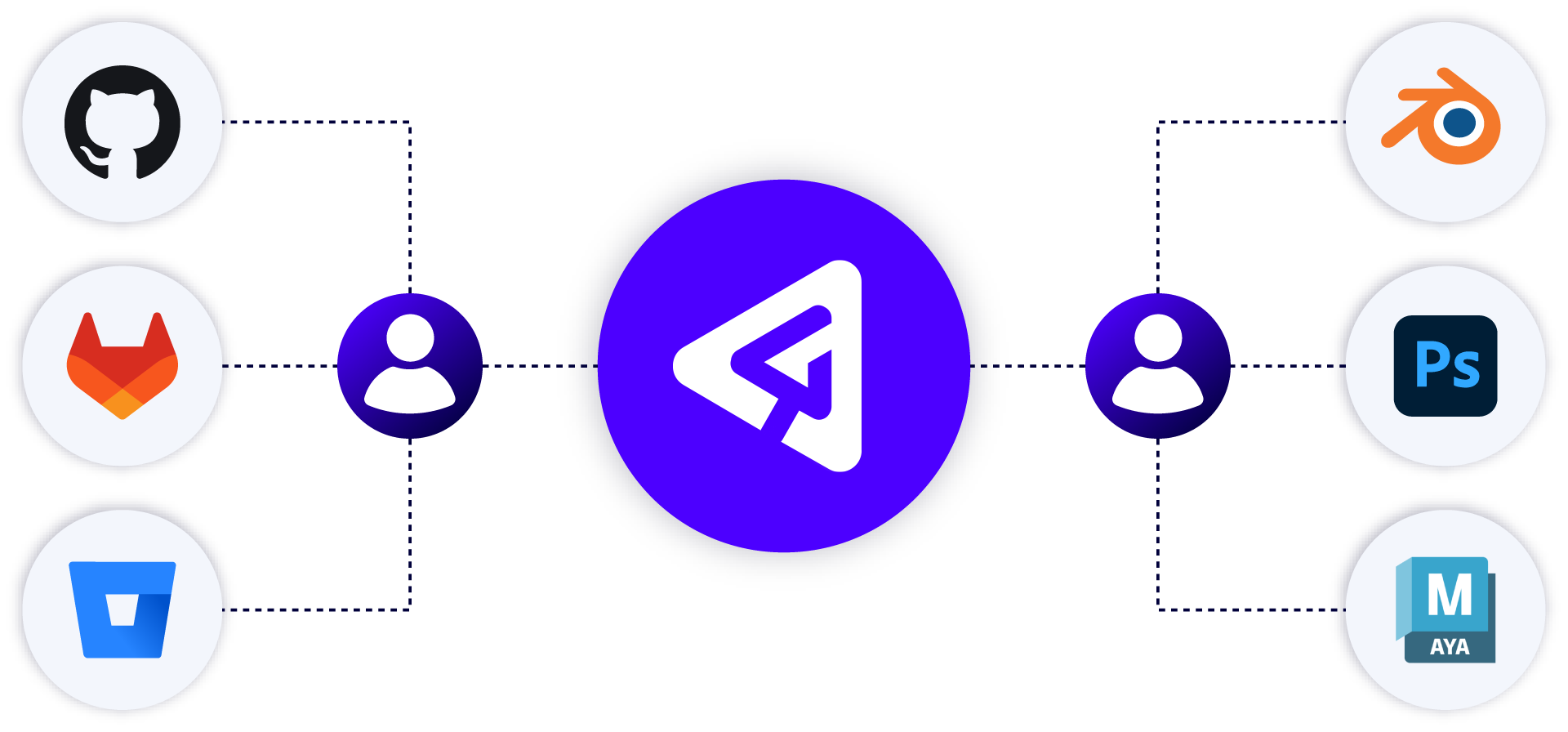

The P4 Platform provides one source of truth, with multiple ways to see and maintain it. For teams that need to use Git, our free P4 Git Connector provides a secure path for your developers to use their preferred Git tools (including GitHub, GitLab, and Bitbucket), while your art and design teams use a version control solution that won’t slow your studio down.

The P4 Git Connector lets you consolidate Git code with all your digital assets in P4, establishing a unified source of truth for builds and enabling a comprehensive disaster recovery strategy.

Why Teams Using Git Trust P4

Advanced Branching Models with Built-in Visualization

Managing workflows with multiple vendors, contractors, and remote teams can get complex. P4’s advanced branching models (via Streams) let you orchestrate the relationship between users and files with a level of control you can’t get anywhere else.

File-Level (and IP Address-Specific) Permissions

Protect your most valuable IP and give collaborators access to only the files they need. Only P4 offers granular access control down to the file level and by IP address.

Version Control Solutions for Art and Design

Let your creators version their work by dragging and dropping files into our free companion app, P4 Sync, or through our intuitive digital asset management system, P4 DAM.

Large File Size and Repo Handling

P4 is designed to handle large assets over 100 MB, project repos over 10 TB, and global, multi-site topologies. It’s why P4 is at the center of development across industries, from gaming and VFX to semiconductor and automotive.
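To get a rough sense of how many assets in an existing Git working tree cross the 100 MB mark mentioned above, a short script can help. The following is a minimal sketch, not part of any Perforce tooling: the function name `find_large_files` and the choice to skip the `.git` directory are assumptions made for illustration.

```python
import os

# 100 MB threshold, taken from the figure quoted in the text above.
LARGE_FILE_BYTES = 100 * 1024 * 1024


def find_large_files(root, threshold=LARGE_FILE_BYTES):
    """Return (path, size_in_bytes) pairs for files under root that are
    larger than threshold, sorted largest first.

    Hypothetical helper for illustration only.
    """
    results = []
    for dirpath, dirnames, filenames in os.walk(root):
        # Skip Git's internal storage so only working-tree files are counted.
        dirnames[:] = [d for d in dirnames if d != ".git"]
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                size = os.path.getsize(path)
            except OSError:
                # Unreadable file or broken symlink: ignore it.
                continue
            if size > threshold:
                results.append((path, size))
    return sorted(results, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Report large files in the current directory.
    for path, size in find_large_files("."):
        print(f"{size / (1024 * 1024):.1f} MB  {path}")
```

Run from the root of a working tree, the script lists each file above the threshold, largest first. A long list suggests the repo is likely to hit the file and repo size limits described earlier.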

P4 is the Industry Standard

Trusted by 19/20 top AAA game dev studios

82% rated P4’s ability to scale as “Best in Class.”*

100% realized a return on their investment within 2 years.*

Trusted by 9/10 top semiconductor companies

*Data gathered from a 2023 customer survey.

“P4 has been a great addition to our software development suite. The advanced mapping features have greatly sped up our development workflows.”

Install P4 Git Connector

Gain a single source of truth for your builds by getting started with our free P4 Git Connector on P4.

Get Started with P4

You can get started with P4 for free for up to 5 users and 20 workspaces.